influxdb - go

https://docs.influxdata.com/influxdb/v2.4/organizations/

mac:

brew install influxdb

influxd run

Config file: /usr/local/etc/influxdb2/config.yml
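Once `influxd run` is up, a quick way to check that the server is ready before pointing a client at it is its `/health` endpoint on the default port 8086. The sketch below uses only the standard library and assumes the 2.x `/health` response is JSON whose `status` field is `"pass"` when the server is ready; `parseHealth` and `checkHealth` are hypothetical helper names.

```go
package main

import (
	"encoding/json"
	"fmt"
	"io"
	"net/http"
	"time"
)

// parseHealth decides whether a /health response means "ready":
// HTTP 200 plus a JSON body whose "status" field is "pass" (assumed format).
func parseHealth(statusCode int, body []byte) (bool, error) {
	var h struct {
		Status string `json:"status"`
	}
	if err := json.Unmarshal(body, &h); err != nil {
		return false, fmt.Errorf("decode /health body: %w", err)
	}
	return statusCode == http.StatusOK && h.Status == "pass", nil
}

// checkHealth performs GET <baseURL>/health with a short timeout.
func checkHealth(baseURL string) (bool, error) {
	client := &http.Client{Timeout: 3 * time.Second}
	resp, err := client.Get(baseURL + "/health")
	if err != nil {
		return false, err
	}
	defer resp.Body.Close()
	body, err := io.ReadAll(resp.Body)
	if err != nil {
		return false, err
	}
	return parseHealth(resp.StatusCode, body)
}

func main() {
	ok, err := checkHealth("http://127.0.0.1:8086")
	if err != nil {
		fmt.Println("influxd not reachable:", err)
		return
	}
	fmt.Println("influxd healthy:", ok)
}
```

Splitting the parsing out of the HTTP call keeps the "is it ready?" rule testable without a running server.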

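Concepts

InfluxDB is a time-series database.

org: organization, the workspace that users and their data belong to

database/bucket: database (InfluxDB 2.x calls it a bucket)

measurement: table

point: one row of data, made up of the following parts:

measurement: metric name

time: timestamp

field: metric value

tags: attributes

series: a collection of data. Within one database, points that share the same retention policy, measurement, and tag set belong to one series, and a series is stored contiguously on disk.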
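The series definition above can be illustrated with a small stdlib sketch: points whose measurement and tag set match (regardless of tag order) land in the same series. It assumes all points belong to one database and retention policy, so the key is just the measurement plus the tags sorted by key; `Point`, `seriesKey`, and `groupBySeries` are hypothetical names, not part of the InfluxDB client, and escaping of special characters is ignored.

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

// Point mirrors the parts of a point listed above (hypothetical type,
// not the client library's).
type Point struct {
	Measurement string
	Tags        map[string]string
	Fields      map[string]interface{}
}

// seriesKey joins the measurement with the tag set sorted by tag key,
// e.g. "pro1,host=a,unit=temperature". Escaping is omitted.
func seriesKey(p Point) string {
	keys := make([]string, 0, len(p.Tags))
	for k := range p.Tags {
		keys = append(keys, k)
	}
	sort.Strings(keys)
	var b strings.Builder
	b.WriteString(p.Measurement)
	for _, k := range keys {
		b.WriteString("," + k + "=" + p.Tags[k])
	}
	return b.String()
}

// groupBySeries buckets points that share measurement + tag set.
func groupBySeries(points []Point) map[string][]Point {
	out := make(map[string][]Point)
	for _, p := range points {
		k := seriesKey(p)
		out[k] = append(out[k], p)
	}
	return out
}

func main() {
	points := []Point{
		{"pro1", map[string]string{"unit": "temperature"}, map[string]interface{}{"avg": 24.5}},
		{"pro1", map[string]string{"unit": "temperature"}, map[string]interface{}{"avg": 25.0}},
		{"pro1", map[string]string{"unit": "humidity"}, map[string]interface{}{"avg": 60.0}},
	}
	for k, ps := range groupBySeries(points) {
		fmt.Printf("%s: %d points\n", k, len(ps))
	}
}
```

Field values play no part in the key: two points with different `avg` values but the same measurement and tags belong to the same series.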

Go client for InfluxDB 2.x

go get github.com/influxdata/influxdb-client-go/v2

https://github.com/influxdata/influxdb-client-go

package main

import (
	"context"
	"fmt"
	"time"

	influxdb2 "github.com/influxdata/influxdb-client-go/v2"
	"github.com/sirupsen/logrus"
)

// Hard-coding the token is for local testing only; don't do this in production.
var (
	token = "RTqC84IwjDwG4Lb6G4DCdxT9w3padfGzsQM03gnq6-nt-_O6l-d7UHvGdG96r-sD9fySvNlYAPM0OARVbXzyTA=="
)

// conninflux creates a client for the local influxd (default port 8086).
func conninflux() influxdb2.Client {
	cli := influxdb2.NewClient("http://127.0.0.1:8086", token)
	return cli
}

// queryinf runs a Flux query against bucket "pro1" in org "project1"
// and prints every result table and row.
func queryinf(cli influxdb2.Client) {
	// Query API for the org
	queryAPI := cli.QueryAPI("project1")
	// Flux query: bucket, time range, measurement
	result, err := queryAPI.Query(context.Background(), `from(bucket:"pro1") |> range(start: -1h) |> filter(fn: (r) => r._measurement == "pro1")`)
	if err != nil {
		fmt.Printf("Query failed: %s\n", err)
		return
	}
	// Use Next() to iterate over query result lines
	for result.Next() {
		// A new group key starts a new table
		if result.TableChanged() {
			fmt.Printf("table: %s\n", result.TableMetadata().String())
		}
		// Read the current row
		fmt.Printf("row: %s\n", result.Record().String())
	}
	if result.Err() != nil {
		fmt.Printf("Query error: %s\n", result.Err().Error())
	}
}

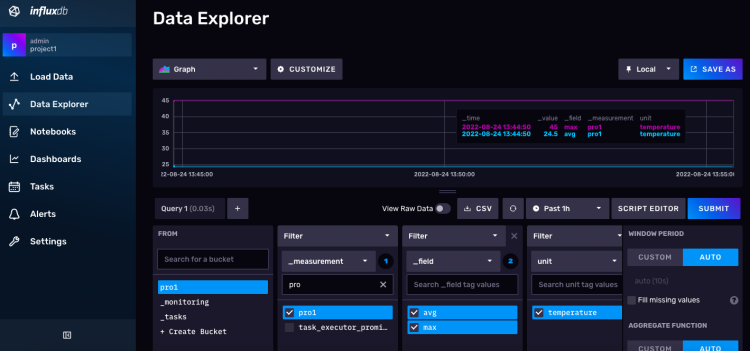

// writeapi writes one point to bucket "pro1" in org "project1".
func writeapi(cli influxdb2.Client) {
	// Use the blocking write client for writes to the desired bucket
	writeAPI := cli.WriteAPIBlocking("project1", "pro1")
	// NewPoint(measurement string, tags map[string]string, fields map[string]interface{}, ts time.Time)
	// i.e. metric name, tags, metric values, timestamp
	p := influxdb2.NewPoint("pro1",
		map[string]string{"unit": "temperature"},
		map[string]interface{}{"avg": 24.5, "max": 45},
		time.Now())
	// Blocking (synchronous) write: returns once the server has answered
	err := writeAPI.WritePoint(context.Background(), p)
	if err != nil {
		logrus.Warnf("Failed to write point: %v", err)
	} else {
		logrus.Info("Success")
	}
	// Or write line protocol directly
	// line := fmt.Sprintf("stat,unit=temperature avg=%f,max=%f", 23.5, 45.0)
	// writeAPI.WriteRecord(context.Background(), line)
}

func main() {
	cli := conninflux()
	// Release the client's resources when main returns
	defer cli.Close()
	writeapi(cli)
	queryinf(cli)
}

//go run ./influxdb.go
//INFO[0000] Success
//table: col{0: name: result, datatype: string, defaultValue: _result, group: false},col{1: name: table, datatype: long, defaultValue: , group: false},col{2: name: _start, datatype: dateTime:RFC3339, defaultValue: , group: true},col{3: name: _stop, datatype: dateTime:RFC3339, defaultValue: , group: true},col{4: name: _time, datatype: dateTime:RFC3339, defaultValue: , group: false},col{5: name: _value, datatype: double, defaultValue: , group: false},col{6: name: _field, datatype: string, defaultValue: , group: true},col{7: name: _measurement, datatype: string, defaultValue: , group: true},col{8: name: unit, datatype: string, defaultValue: , group: true}
//row: _start:2022-08-24 04:55:23.749402 +0000 UTC,_stop:2022-08-24 05:55:23.749402 +0000 UTC,_time:2022-08-24 05:44:43.119763 +0000 UTC,_value:24.5,_field:avg,_measurement:pro1,result:_result,table:0,unit:temperature
//row: result:_result,table:0,_start:2022-08-24 04:55:23.749402 +0000 UTC,_field:avg,_measurement:pro1,unit:temperature,_stop:2022-08-24 05:55:23.749402 +0000 UTC,_time:2022-08-24 05:55:23.728327 +0000 UTC,_value:24.5
//table: col{0: name: result, datatype: string, defaultValue: _result, group: false},col{1: name: table, datatype: long, defaultValue: , group: false},col{2: name: _start, datatype: dateTime:RFC3339, defaultValue: , group: true},col{3: name: _stop, datatype: dateTime:RFC3339, defaultValue: , group: true},col{4: name: _time, datatype: dateTime:RFC3339, defaultValue: , group: false},col{5: name: _value, datatype: long, defaultValue: , group: false},col{6: name: _field, datatype: string, defaultValue: , group: true},col{7: name: _measurement, datatype: string, defaultValue: , group: true},col{8: name: unit, datatype: string, defaultValue: , group: true}
//row: _stop:2022-08-24 05:55:23.749402 +0000 UTC,_time:2022-08-24 05:44:43.119763 +0000 UTC,_value:45,_measurement:pro1,result:_result,_start:2022-08-24 04:55:23.749402 +0000 UTC,_field:max,unit:temperature,table:1
//row: _measurement:pro1,_start:2022-08-24 04:55:23.749402 +0000 UTC,_time:2022-08-24 05:55:23.728327 +0000 UTC,_stop:2022-08-24 05:55:23.749402 +0000 UTC,_value:45,_field:max,unit:temperature,result:_result,table:1

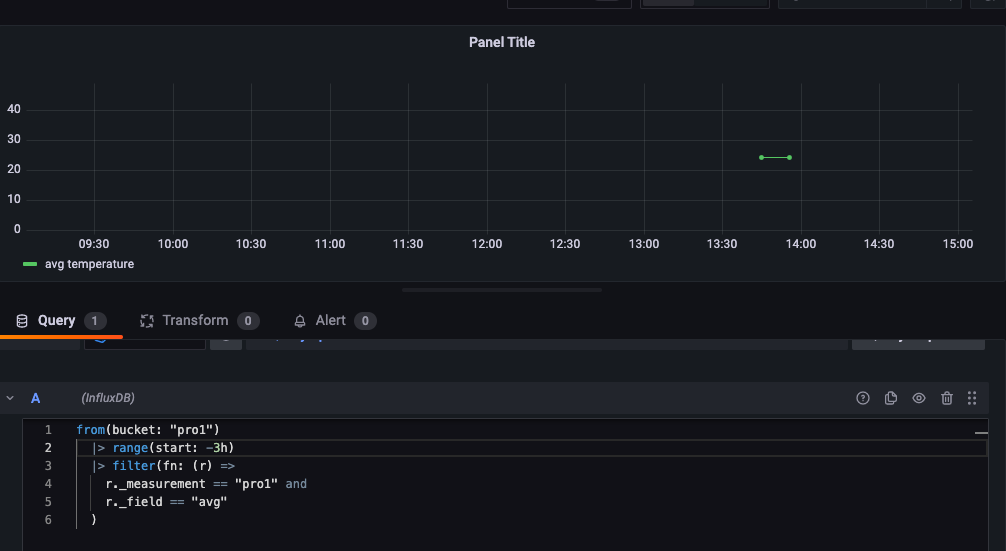

grafana

https://www.jianshu.com/p/072b9a8a3d1a

https://grafana.com/docs/

/usr/local/opt/grafana/bin/grafana-server --config /usr/local/etc/grafana/grafana.ini --homepath /usr/local/opt/grafana/share/grafana --packaging=brew cfg:default.paths.logs=/usr/local/var/log/grafana cfg:default.paths.data=/usr/local/var/lib/grafana cfg:default.paths.plugins=/usr/local/var/lib/grafana/plugins

Note when using InfluxDB 2.x: you cannot connect to the database with a username and password. Set the query language to Flux, then connect with a token and org.
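This mirrors how the InfluxDB 2.x HTTP API authenticates: requests carry an `Authorization: Token <token>` header, and queries are scoped to an org. A stdlib-only sketch that builds (but does not send) such a request against `POST /api/v2/query?org=<org>` with a Flux body; `newFluxQueryRequest` is a hypothetical helper, and the org and token values are placeholders.

```go
package main

import (
	"fmt"
	"net/http"
	"net/url"
	"strings"
)

// newFluxQueryRequest builds a POST to the 2.x /api/v2/query endpoint.
// Authentication is by token, and the org goes in the query string;
// no username or password is involved.
func newFluxQueryRequest(baseURL, org, token, flux string) (*http.Request, error) {
	u, err := url.Parse(baseURL + "/api/v2/query")
	if err != nil {
		return nil, err
	}
	q := u.Query()
	q.Set("org", org)
	u.RawQuery = q.Encode()

	req, err := http.NewRequest(http.MethodPost, u.String(), strings.NewReader(flux))
	if err != nil {
		return nil, err
	}
	req.Header.Set("Authorization", "Token "+token)
	req.Header.Set("Content-Type", "application/vnd.flux")
	req.Header.Set("Accept", "application/csv")
	return req, nil
}

func main() {
	req, err := newFluxQueryRequest("http://127.0.0.1:8086", "project1", "my-token",
		`from(bucket: "pro1") |> range(start: -1h)`)
	if err != nil {
		panic(err)
	}
	fmt.Println(req.Method, req.URL.String())
	// Sending it would be: resp, err := http.DefaultClient.Do(req)
}
```

The same two values, token and org, are what the Flux data source settings ask for.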